Sora 2: The Next Leap in AI Video Creation

Artificial intelligence continues to evolve rapidly, and OpenAI’s Sora 2 is the latest breakthrough that has everyone talking. Sora 2 takes video generation to a new level, transforming simple text prompts into realistic, high-quality videos with synchronized sound, movement, and storytelling.

But what exactly is Sora 2? How does it work? And how can it be used by students, professionals, and businesses? Let’s explore.

What Is Sora 2?

Sora 2 is OpenAI’s second-generation text-to-video model. With just a short sentence, you can generate a detailed video complete with background sounds, character movement, and natural lighting.

For example:

“A small puppy chasing a butterfly in a sunny garden.”

Sora 2 then generates a short clip, around 10 seconds long, that visually matches your description, with realistic motion, physics, and even sound effects.

Unlike the first version of Sora, which focused mainly on visuals, Sora 2 adds audio generation and better realism. The new version also integrates directly with ChatGPT, allowing you to describe, refine, and edit your videos through conversation.

Sora 2: The Video Model

The first thing I noticed about Sora 2 is that the OpenAI team has finally cracked native audio output, which until now was one of the biggest selling points of Google’s Veo 3. Sora 2 can generate dialogue, background ambience, and sound effects directly alongside the visuals, without having to stitch anything in afterward.

How Does Sora 2 Work? (Simplified Explanation)

1. Text Understanding:

The model reads and interprets your prompt to understand objects, actions, emotions, and setting.

2. Scene Simulation:

Sora 2 uses deep learning techniques, reportedly diffusion models combined with transformers, to generate realistic scenes while keeping motion consistent across frames.

3. Audio Synchronization:

The AI adds ambient sound, dialogue, and music that match the mood and timing of the visuals.

4. Rendering and Post-Processing:

Finally, it combines everything into a seamless video, ready to share or edit.
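To make the four stages concrete, here is a toy Python sketch of how data could flow between them. It is not how Sora 2 is implemented: OpenAI has not published its internals, and every name here (`understand_text`, `simulate_scene`, `synchronize_audio`, `render`) as well as the 24 fps frame rate and 48 kHz audio rate are assumptions chosen for illustration. The useful part is the synchronization arithmetic: a sound meant to start on frame 36 at 24 fps begins at 1.5 seconds, which is audio sample 72,000 at 48 kHz.

```python
from dataclasses import dataclass

# Assumed output settings for illustration only; not official Sora 2 parameters.
FPS = 24
SAMPLE_RATE = 48_000  # audio samples per second


@dataclass
class Scene:
    subject: str
    action: str
    setting: str


def understand_text(prompt: str) -> Scene:
    """Stage 1 (toy): split a prompt shaped like '<subject> <verb>ing ... in <setting>'."""
    before, _, setting = prompt.rstrip(".").partition(" in ")
    words = before.split()
    # The first word ending in "ing" is treated as the start of the action.
    verb_at = next(i for i, w in enumerate(words) if w.endswith("ing"))
    return Scene(" ".join(words[:verb_at]), " ".join(words[verb_at:]), setting)


def simulate_scene(scene: Scene, seconds: float) -> list[dict]:
    """Stage 2 (placeholder): one record per frame instead of real pixels."""
    n_frames = round(seconds * FPS)
    return [{"index": i, "time_s": i / FPS, "content": scene.action} for i in range(n_frames)]


def frame_to_sample(frame: int) -> int:
    """Stage 3 helper: the audio sample that lines up with the start of a frame."""
    return round(frame / FPS * SAMPLE_RATE)


def synchronize_audio(sound_events: dict[str, int]) -> dict[str, int]:
    """Stage 3 (toy): convert {sound label: frame index} into {label: sample offset}."""
    return {label: frame_to_sample(frame) for label, frame in sound_events.items()}


def render(frames: list[dict], audio_cues: dict[str, int]) -> dict:
    """Stage 4 (placeholder): bundle video and audio timelines of equal length."""
    return {
        "frames": len(frames),
        "duration_s": len(frames) / FPS,
        "audio_samples": frame_to_sample(len(frames)),
        "audio_cues": audio_cues,
    }


scene = understand_text("A small puppy chasing a butterfly in a sunny garden.")
frames = simulate_scene(scene, seconds=10)
cues = synchronize_audio({"bark": 36, "rustling leaves": 0})
video = render(frames, cues)
print(scene)
print(video["frames"], video["audio_samples"], video["audio_cues"])
# 240 480000 {'bark': 72000, 'rustling leaves': 0}
```

A real model produces pixels and waveforms rather than placeholder records, but the constraint is the same: video and audio must share one timeline, so both are derived from the same clock.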

Examples of Sora 2 in Action

- Education:

A teacher types “The solar system explained with animated planets orbiting the sun.”

→ Sora 2 produces a short video lesson that visually explains planetary motion.

- Marketing:

A small business writes “A coffee cup with steam rising and a cozy morning vibe.”

→ Sora 2 generates a video perfect for a café’s social media ad.

- Entertainment:

A storyteller says “A dragon flying over snowy mountains at dusk.”

→ The AI creates a short, cinematic fantasy clip.

Benefits of Sora 2

| Category | Benefits | Example |
|---|---|---|
| Business | Create professional marketing videos quickly and affordably | Product promos, brand teasers, and explainer clips |
| Education | Turn lessons into engaging visuals for better understanding | Animated science or history lessons |
| Content Creation | Save time and boost creativity | Storyboards, short films, social reels |
| Professionals | Communicate ideas visually without production teams | Prototype designs or presentation visuals |

How Was Sora 2 Built?

Sora 2 builds on OpenAI’s earlier work on generative models, including the research behind GPT-4 and DALL·E.

It combines several advanced systems:


- Diffusion models: Transform random noise into detailed images and frames.

- Transformer networks: Maintain consistency and logic between video frames.

- Audio generation models: Produce synchronized sound based on scene context.

- Physics and world modeling: Ensure realistic movement, light, and texture.
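The diffusion idea in the first bullet can be shown with a toy Python sketch: a deterministic DDIM-style sampling loop that starts from pure Gaussian noise and, step by step, removes predicted noise until a four-value “frame” emerges. This is a generic textbook technique with an assumed linear noise schedule, not Sora 2’s actual sampler, which OpenAI has not published. The noise predictor is also an oracle that already knows the target; in a real model that role is played by a trained network (OpenAI’s report on the original Sora described it as a diffusion transformer).

```python
import math
import random

# Toy stand-in for a frame: four pixel intensities the sampler should arrive at.
TARGET = [0.1, 0.8, 0.5, 0.3]
STEPS = 1000

# Assumed linear beta schedule; alpha_bar[t] is the product of (1 - beta) up to t,
# i.e. how much of the original signal survives after t noising steps.
betas = [1e-4 + (0.02 - 1e-4) * t / (STEPS - 1) for t in range(STEPS)]
alpha_bar = []
keep = 1.0
for beta in betas:
    keep *= 1.0 - beta
    alpha_bar.append(keep)


def predict_noise(x, t):
    """Oracle noise predictor. A real model uses a trained network here; this one
    cheats by knowing TARGET so that the sampling loop itself is easy to check."""
    a = alpha_bar[t]
    return [(xi - math.sqrt(a) * ti) / math.sqrt(1.0 - a) for xi, ti in zip(x, TARGET)]


def sample(seed=0):
    """Deterministic DDIM-style reverse loop from t = STEPS-1 down to 0."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in TARGET]  # start from pure noise
    for t in reversed(range(STEPS)):
        a_t = alpha_bar[t]
        a_prev = alpha_bar[t - 1] if t > 0 else 1.0
        eps = predict_noise(x, t)
        # Estimate the clean frame, then re-noise it to the (smaller) noise level of step t-1.
        x0_hat = [(xi - math.sqrt(1.0 - a_t) * ei) / math.sqrt(a_t) for xi, ei in zip(x, eps)]
        x = [math.sqrt(a_prev) * c + math.sqrt(1.0 - a_prev) * ei for c, ei in zip(x0_hat, eps)]
    return x


result = sample()
print([round(v, 3) for v in result])  # prints [0.1, 0.8, 0.5, 0.3]
```

Replacing the oracle with a learned network is where all the difficulty lies; the loop around it stays roughly this simple, and a video model runs it over many frames (or patches of frames) at once.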

The goal is a model that not only “sees” but also captures how the real world behaves: objects falling, water rippling, people walking naturally, and so on.

OpenAI trained Sora 2 on large amounts of visual and audio data so that it can learn how real environments look and sound. It also includes safety measures intended to prevent misuse and the creation of harmful or misleading content.

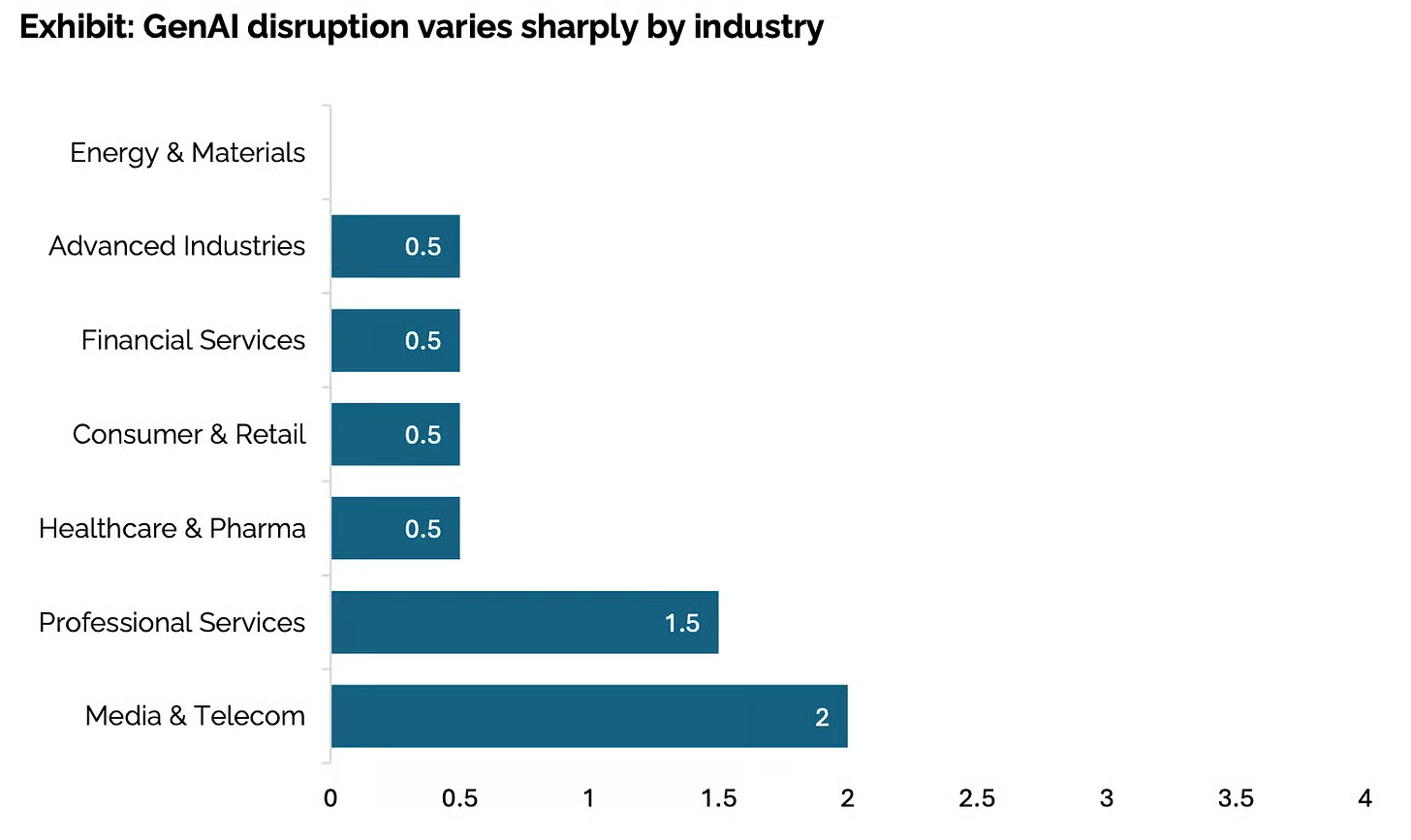

AI and the Media Revolution: Why Video Is Leading the Way

According to a recent Stanford AI report, artificial intelligence hasn’t yet transformed most industries — except one: media. And that exception is not a coincidence.

Across the world, advertising producers and creative teams are rapidly adopting AI tools to cut production costs, speed up video timelines, and simplify what used to be expensive and time-consuming shoots. Instead of managing big crews or renting studios, many now rely on AI-generated content to bring their ideas to life in hours, not weeks.

You can already see this transformation happening online. A quick scroll through LinkedIn, YouTube, or X (Twitter) reveals a growing wave of creators sharing AI-made short films, product ads, and cinematic concept trailers. One great example is the Kalshi ad—a real marketing campaign produced entirely with AI, from visuals to voice-over.

This trend explains why OpenAI, Google, and Meta are all racing to dominate the AI-video space. If there’s one industry where generative AI is already delivering real value, it’s the world of digital media and entertainment. People are actively using, sharing, and paying for AI video tools, and big tech companies are building entire platforms and ecosystems to capture that demand.

Recently, Meta unveiled “Vibes”, a new short-form AI-video feed that mirrors OpenAI’s Sora app—both aiming to make AI-driven media social, interactive, and accessible. The pattern is clear: every major player wants to claim the AI-video frontier before the technology expands into more regulated sectors like finance, healthcare, or law.

In simple terms, AI-generated video is the lowest-hanging fruit in the creative industry, and everyone—from startups to global tech giants—is racing to pick it first.

How Can You Use Sora 2 in Your Daily Life or Work?

1. For Business Owners:

Use Sora 2 to generate ad videos, product demonstrations, or brand stories without hiring a video team.

2. For Students:

Visualize lessons, projects, or scientific concepts with simple text prompts—great for school presentations or online learning.

3. For Professionals:

Designers and marketers can use Sora 2 for brainstorming, storyboarding, and creating quick visual prototypes.

4. For Creators:

Bloggers, YouTubers, and influencers can enhance their storytelling with unique AI-made clips.

Example prompt for marketing:

“A young woman unboxing eco-friendly skincare products in a bright, minimalist studio with soft background music.”

Sora 2 can turn this into a short clip, around 10 seconds long, that works as a starting point for an ad.
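If you write many prompts, for example several ad variants, it can help to keep the ingredients that the example prompts in this article share (subject, setting, style, camera, and sound) as separate fields. The Python sketch below is a hypothetical helper for that: `ShotSpec` and `to_prompt` are made-up names, and the output is ordinary text you would paste into Sora 2, not an official prompt format.

```python
from dataclasses import dataclass, replace


@dataclass
class ShotSpec:
    """Hypothetical prompt ingredients. Sora 2 itself simply takes free text."""
    subject: str
    setting: str
    style: str = ""
    camera: str = ""
    audio: str = ""

    def to_prompt(self) -> str:
        # Lead with who/what and where, then append any style, camera, and sound cues.
        parts = [f"{self.subject} in {self.setting}"]
        parts += [extra for extra in (self.style, self.camera, self.audio) if extra]
        return ", ".join(parts) + "."


ad = ShotSpec(
    subject="A young woman unboxing eco-friendly skincare products",
    setting="a bright, minimalist studio",
    camera="single shot",
    audio="soft background music",
)
print(ad.to_prompt())

# Same shot, different looks: handy for comparing ad variants side by side.
variants = [replace(ad, style=s).to_prompt() for s in ("35mm film", "warm morning light")]
for prompt in variants:
    print(prompt)
```

Keeping the fields separate makes it easy to change one element (say, the visual style) while holding everything else fixed, so differences between the resulting clips are easier to attribute.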

Why Sora 2 Matters

AI tools like Sora 2 represent a shift from passive viewing to creative participation. Anyone can now direct short videos, experiment with visual storytelling, or generate educational visuals—all from text.

In the long term, Sora 2 and similar tools may redefine how we create films, ads, online lessons, and even personal memories.

Sora 2 is here

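In OpenAI’s own words: “Our latest video generation model is more physically accurate, realistic, and more controllable than prior systems. It also features synchronized dialogue and sound effects.” The launch announcement showcased clips generated from prompts such as these: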

Prompt: a person is standing on 2 horses with legs spread. make it not slowmo also realistic. the guy fell off pretty hard in the end. single shot

Prompt: a man does a backflip on a paddleboard

Prompt: in the style of a japanese anime, a melancholy scene under the fireworks of a night sky. the world is so happy, but not the two star-crossed protagonists of this gorgeous japanese town in the middle of a festival. film-caliber sakuga japanese animation, close-up shots of the characters having a conversation in japanese, beautiful fluid hand-drawn animation

Prompt: an astronaut golden retriever named Sora levitates around an intergalactic pup-themed space station with a tiny jet back that propels him. gorgeous specular lighting and comets fly through the sky, retro-future astro-themed music plays in the background. light glimmers off the dog’s eyes. the dog initially propels towards the space station with the doors opening to let him in. the shot then changes. now inside the space station, many tennis balls are flying around in zero gravity. the dog’s astronaut helmet opens up so he can grab one. 35mm film, the intricate details and texturing of the dog’s hair are clearly visible and the light of the comets shimmers off the fur.

Your questions answered

1. What is Sora 2?

Sora 2 is OpenAI’s upgraded text-to-video model. It lets you convert a short text description into a video clip, including visuals, motion, and synchronized sound (ambient audio, voices). It improves realism and control over what you see and hear.

2. What are common problems or errors users face?

- The “Hmmm something didn’t look right with your request” error, which can appear when a prompt is too complex or when there is a server issue.

- Video uploads fail (the upload option is greyed out) or the file format is not accepted.

- Access is restricted for new accounts.
